Protocol Diversity Is Not the Problem.

Fragmented Processing Is.

“Smart infrastructure projects do not fail because the wrong sensor protocol was selected.”

Blaming the protocol is the easier explanation, and often the most comfortable one. A project runs into trouble, data becomes inconsistent, integrations slow down, reports need correction, and eventually someone points at the device layer. Too many vendors. Too many protocols. Too many gateways. Too many historical decisions.

There is some truth in that. Real infrastructure is rarely clean. Buildings are not replaced as a single unit. Cities do not modernize one district at a time with perfect technical consistency. Submetering companies inherit installed bases, customer requirements, regional habits, supplier relationships, and procurement decisions that were made years earlier.

So yes, protocol diversity is real. But it is not the core problem.

The deeper issue begins when every protocol, vendor, or integration path brings its own processing logic with it. One system receives the message. Another validates it. Another stores it. Another corrects it. Another exports it. Another prepares it for billing. Over time, the company no longer operates a telemetry platform. It operates a collection of partial interpretations.

That distinction matters.

A multi-protocol environment can still be orderly. A fragmented processing environment rarely stays that way.

At small scale, fragmentation can remain hidden. A missing value is corrected manually. A gateway issue is explained by someone who knows the installation history. A billing export is checked before it leaves the company. A support ticket is solved because one experienced employee remembers how that specific vendor formats its data.

This feels operationally normal because the organization has adapted around it. But adaptation is not architecture.

As the number of devices grows, these small reconciliations begin to define the business. Not officially. No one writes a strategy document called “manual interpretation layer.” It simply appears. A few spreadsheets. A few scripts. A few special cases in the backend. A few customer-specific rules. A few people who must be consulted before certain reports are trusted.

Then the company hires more people, adds more monitoring, introduces more internal checks, and calls it maturity.

Sometimes it is maturity.

Sometimes it is the cost of not having one coherent processing model.

This is where leadership needs to be careful. The visible complexity is usually at the edge: meters, gateways, radio conditions, tenant changes, installation quality. That is what everyone can see. The less visible complexity sits behind it, where incoming data is interpreted and turned into something the business can rely on.

That layer determines whether the organization has one version of operational truth or several competing versions that must be reconciled later.

In regulated markets, this is not a technical detail. It affects billing confidence, audit readiness, customer trust, and internal cost. If one system believes a device is active, another marks it silent, and a third contains corrected historical values, the question is no longer only “Which value is right?” The better question is: why can more than one answer exist?

Protocol diversity does not automatically create that condition.

Fragmented processing does.
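One way the "active in one system, silent in another" disagreement can arise is that each system applies its own private threshold for when a device counts as silent. The sketch below shows the alternative in Python: a single shared rule that every consumer calls. The names `device_status` and `MISSED_INTERVALS_BEFORE_SILENT`, and the three-interval policy itself, are assumptions made for illustration, not a recommendation for any specific deployment.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    SILENT = "silent"


# Assumed policy for this sketch: a device is silent once it has missed
# more than three expected reporting intervals.
MISSED_INTERVALS_BEFORE_SILENT = 3


def device_status(last_seen: datetime,
                  expected_interval: timedelta,
                  now: datetime) -> Status:
    """Single definition of 'silent', shared by every consumer."""
    if now - last_seen > expected_interval * MISSED_INTERVALS_BEFORE_SILENT:
        return Status.SILENT
    return Status.ACTIVE


# Monitoring, billing, and support all ask the same function,
# so they cannot disagree about the same device at the same moment.
now = datetime(2024, 1, 2, tzinfo=timezone.utc)
hourly = timedelta(hours=1)
recent = device_status(now - timedelta(hours=2), hourly, now)
stale = device_status(now - timedelta(hours=4), hourly, now)
```

The specific threshold matters less than the fact that it is defined in one place. Changing the policy then changes every report at once, instead of opening a new gap between systems.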

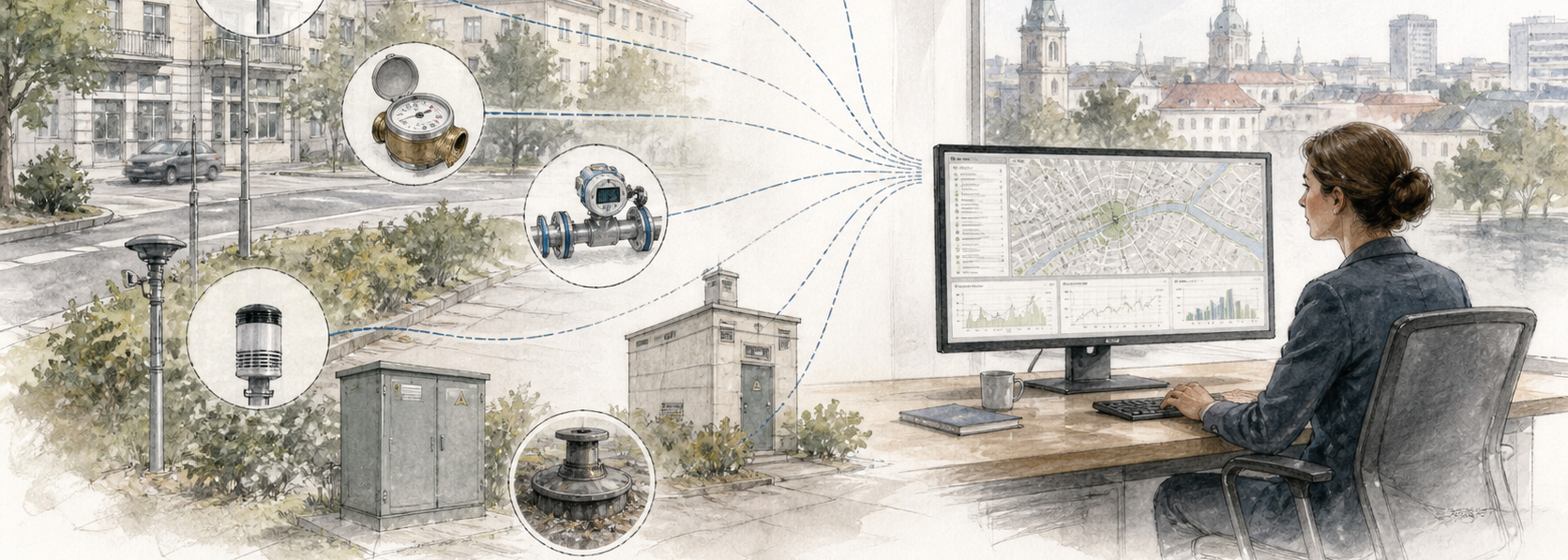

This becomes more important as smart city and submetering projects expand beyond narrow use cases. A city may begin with air quality sensors, then add water monitoring, energy consumption, traffic flows, public buildings, environmental reporting, and district-level infrastructure data. A metering company may begin with heat and water, then add remote reading, tenant portals, ESG reporting, billing integrations, and partner APIs.

None of these steps is unreasonable.

The problem appears when each new requirement is added as another vertical slice. Another pipeline. Another storage habit. Another interpretation rule. Another place where data becomes “almost normalized” but not quite.

That is how infrastructure becomes difficult to reason about.

Not because the people are careless. Usually the opposite is true: they are working hard to keep the system usable. But the more effort it takes to keep data aligned, the more the company should question whether the processing layer is carrying the responsibilities it should.

A serious telemetry environment should accept protocol diversity at the edge while reducing interpretation diversity at the center.

That does not mean every device must behave the same way. It means the business should not be forced to understand every device through a separate operational lens. Once data enters the processing domain, the organization needs consistency: identity, state, timing, validation, access, retention, and reporting logic should not be reinvented for every incoming path.
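As a minimal sketch of what "diversity at the edge, consistency at the center" could look like in code, the Python example below uses thin per-source adapters that translate incoming payloads into one canonical record, followed by a single validation step. Everything here is hypothetical: the `vendor_a` and `vendor_b` payload shapes, the `Reading` fields, and the validation rules are assumptions chosen for illustration and do not describe any real protocol or product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Reading:
    """Canonical record: the only shape the rest of the business sees."""
    device_id: str
    measured_at: datetime  # always timezone-aware, normalized to UTC
    quantity: str
    value: float
    unit: str


def from_vendor_a(payload: dict) -> Reading:
    # Hypothetical source A: epoch seconds, volume reported in litres.
    return Reading(
        device_id=f"a:{payload['serial']}",
        measured_at=datetime.fromtimestamp(payload["ts"], tz=timezone.utc),
        quantity="water_volume",
        value=payload["litres"] / 1000.0,
        unit="m3",
    )


def from_vendor_b(payload: dict) -> Reading:
    # Hypothetical source B: ISO 8601 timestamp with offset, volume in m3.
    return Reading(
        device_id=f"b:{payload['meter']}",
        measured_at=datetime.fromisoformat(payload["time"]).astimezone(timezone.utc),
        quantity="water_volume",
        value=float(payload["m3"]),
        unit="m3",
    )


# Edge diversity lives here and nowhere else.
ADAPTERS: Dict[str, Callable[[dict], Reading]] = {
    "vendor_a": from_vendor_a,
    "vendor_b": from_vendor_b,
}


def validate(reading: Reading) -> List[str]:
    """One validation rule set, applied to every reading regardless of source."""
    problems = []
    if reading.value < 0:
        problems.append("negative value")
    if reading.measured_at > datetime.now(timezone.utc):
        problems.append("timestamp in the future")
    return problems


def ingest(source: str, payload: dict) -> Tuple[Reading, List[str]]:
    reading = ADAPTERS[source](payload)
    return reading, validate(reading)
```

Under these assumptions, adding a new source means writing one more adapter. Identity, timing, units, and validation stay defined once, so storage, billing, and reporting never need to know which path a reading arrived on.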

This is not a preference for elegance. It is a condition for scale.

The companies that understand this will make a quiet shift. They will stop treating protocol support as the main measure of platform readiness. Supporting many protocols is useful, but it is not enough. The more important question is what happens after the message arrives.

Is it interpreted once, according to a coherent model? Or does it begin a journey through exceptions? The answer determines the future cost of growth.

A platform can look flexible because it accepts many inputs. But if every input increases downstream complexity, that flexibility is expensive. It may still work. It may even work for years. But eventually the organization starts paying for the same architectural decision through support effort, onboarding time, reporting caution, and dependency on internal knowledge.

This is why protocol diversity should be treated as a normal condition of the market, not as a failure to standardize.

Buildings will remain mixed. Cities will remain mixed. Installed bases will remain mixed. Procurement will remain imperfect. That is reality.

The strategic question is whether the processing layer turns that reality into a unified operational view, or whether it preserves the fragmentation and passes the burden forward.

At the edge, diversity is unavoidable.

At the center, fragmentation is optional.

And for any company planning to scale beyond its current comfort zone, that difference may become one of the most important architectural decisions it never formally made.