How KRONYX Works: From Sensor Registration to Live Data Delivery

Organizations deploying sensors, whether for submetering, logistics, environmental monitoring, or municipal infrastructure, all face the same structural question:

How does telemetry move from field hardware into a reliable, compliant, and scalable processing environment without creating long-term operational burden?

KRONYX is built to answer that question from the customer’s perspective. Regardless of whether data arrives through gateways, direct API uploads, or hybrid deployments, the operational model remains consistent.

This article explains how customers register devices, onboard deployments, and access data without operating a telemetry processor internally.

1. Account Initialization and Access Structure

Each customer operates within a dedicated account namespace.

Access is role-based and scoped, ensuring that users only interact with their assigned deployments. Data is logically segregated per account. There is no cross-tenant visibility.
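A role-based, scoped access check of this kind can be sketched as follows. This is a minimal illustration, not the KRONYX implementation: the role names, the `ROLE_ACTIONS` table, and the shape of the user record are all assumptions made for the example.

```python
# Hypothetical roles and the actions each one permits.
ROLE_ACTIONS = {
    "viewer": {"read"},
    "operator": {"read", "register"},
}

def is_allowed(user, action, account_id, deployment_id):
    """Permit an action only within the user's own account and assigned deployments."""
    if user["account_id"] != account_id:
        return False  # no cross-tenant visibility
    if deployment_id not in user["deployments"]:
        return False  # scoped to assigned deployments only
    return action in ROLE_ACTIONS.get(user["role"], set())

viewer = {"account_id": "acct-42", "role": "viewer", "deployments": {"north-campus"}}
print(is_allowed(viewer, "read", "acct-42", "north-campus"))
```

The account check runs first, so a request against another tenant's namespace is rejected regardless of role.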

KRONYX operates strictly as a processor. The customer remains the data controller.

All processing and storage are handled within the European Union, aligned with GDPR processor obligations and EU data residency requirements.

This separation ensures that customers retain ownership and control over their business logic, billing systems, and client relationships.

2. Bulk Onboarding with Structured CSV Upload (DataBoss Model)

Large deployments require structured onboarding.

Rather than manually registering hundreds or thousands of devices, customers upload a structured CSV file using the KRONYX onboarding interface.

The CSV includes:

- Gateway identifiers

- Sensor identifiers

- Protocol type

- Optional metadata (building reference, unit reference, asset group, tenant pseudonym)

- Deployment mapping
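For illustration, a file with the fields listed above could be produced as in the sketch below. The column names and the sample values (including the `wmbus` protocol label) are placeholders assumed for this example; they are not the documented KRONYX schema.

```python
import csv
import io

# Hypothetical column names; the real onboarding schema may differ.
COLUMNS = ["gateway_id", "sensor_id", "protocol", "building_ref", "unit_ref", "deployment"]

rows = [
    {"gateway_id": "GW-001", "sensor_id": "S-1001", "protocol": "wmbus",
     "building_ref": "B12", "unit_ref": "U3", "deployment": "north-campus"},
    {"gateway_id": "GW-001", "sensor_id": "S-1002", "protocol": "wmbus",
     "building_ref": "B12", "unit_ref": "U4", "deployment": "north-campus"},
]

def build_onboarding_csv(records):
    """Serialize device records into CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()

csv_text = build_onboarding_csv(rows)
print(csv_text)
```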

Once uploaded:

- The system validates entries

- Credentials are automatically generated for each gateway and sensor

- Unique authentication tokens are assigned where required

- Records are enriched with system-generated metadata
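The validation and credential-assignment steps above can be sketched roughly as follows. This is an assumption-laden illustration: the required columns, the `auth_token` and `internal_id` output columns, and the ID format are invented for the example, and the actual validation rules are not described in this article.

```python
import csv
import io
import secrets

REQUIRED = ("gateway_id", "sensor_id", "protocol")  # assumed required columns

def enrich(csv_text):
    """Validate each row, then append a generated token and an internal ID."""
    reader = csv.DictReader(io.StringIO(csv_text))
    out_fields = list(reader.fieldnames) + ["auth_token", "internal_id"]
    accepted, errors = [], []
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        missing = [name for name in REQUIRED if not (row.get(name) or "").strip()]
        if missing:
            errors.append((line_no, missing))  # report the row instead of importing it
            continue
        row["auth_token"] = secrets.token_urlsafe(24)        # random per-device secret
        row["internal_id"] = f"dev-{len(accepted) + 1:06d}"  # illustrative ID scheme
        accepted.append(row)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=out_fields, lineterminator="\n")
    writer.writeheader()
    writer.writerows(accepted)
    return buffer.getvalue(), errors

sample = "gateway_id,sensor_id,protocol\nGW-001,S-1001,wmbus\nGW-001,,wmbus\n"
returned_csv, problems = enrich(sample)
print(problems)
```

In this sketch the second data row is rejected because its sensor identifier is empty, and only the valid row appears in the enriched output.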

The returned file is the same CSV, updated with the assigned credentials and internal identifiers.

This approach allows operators to manage onboarding at scale while maintaining deterministic device identity.

Two credential strategies are supported:

1. Per-Device Credentials

Each gateway or sensor receives individual authentication credentials.

This enables granular performance logging, device-level traceability, and detailed diagnostics.

2. Account-Level Credentials

A shared authentication model simplifies deployment and operational management.

Logging becomes less granular, but administration is streamlined.

Customers choose the model based on operational preference.
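The difference in logging granularity between the two strategies can be illustrated with a small sketch. The `Credential` type and the `log_subject` helper are hypothetical names introduced here; they only show that a shared credential can be attributed to an account, while an individual credential can be attributed to a specific device.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Credential:
    account_id: str
    token: str
    device_id: Optional[str] = None  # None marks a shared account-level credential

def log_subject(credential):
    """Return the most specific identity a log entry can be attributed to."""
    if credential.device_id is None:
        return credential.account_id
    return f"{credential.account_id}/{credential.device_id}"

per_device = Credential("acct-42", "token-a", device_id="GW-001")
shared = Credential("acct-42", "token-b")
print(log_subject(per_device), log_subject(shared))
```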

Bulk ingestion capabilities are designed specifically for high-volume environments.

3. Gateway-Based vs Direct Sensor Deployments

KRONYX supports multiple ingestion patterns, but operational behavior remains consistent.

Gateway-Based Deployments

Customers register gateways via CSV onboarding.

Gateways authenticate using their assigned credentials and begin forwarding telemetry messages. Gateway performance, availability, and message flow can be monitored at a level of detail that depends on the chosen credential strategy.

Direct Sensor-to-API Deployments

For devices capable of secure direct uplink:

- Sensors authenticate against assigned ingestion endpoints

- Messages are submitted through secure API requests

- No intermediary gateway is required
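A direct uplink of this kind might be built as in the sketch below. The endpoint URL, the JSON payload shape, and the use of a bearer token in the `Authorization` header are assumptions for illustration; the actual ingestion API is not specified in this article. The request is constructed but deliberately not sent.

```python
import json
import urllib.request

# Placeholder endpoint; a real one would come with the assigned credentials.
INGEST_URL = "https://ingest.example.invalid/v1/telemetry"

def build_uplink(sensor_id, token, reading):
    """Build (but do not send) an authenticated HTTPS POST carrying one reading."""
    body = json.dumps({"sensor_id": sensor_id, "reading": reading}).encode("utf-8")
    return urllib.request.Request(
        INGEST_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
    )

request = build_uplink("S-1001", "example-token",
                       {"ts": "2024-01-01T00:00:00Z", "value": 21.5})
# urllib.request.urlopen(request) would transmit it; omitted because the endpoint is fictional.
print(request.get_method(), request.full_url)
```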

Regardless of ingestion path, device records are updated and data is stored identically downstream.

The ingestion method does not change how data is processed or delivered.

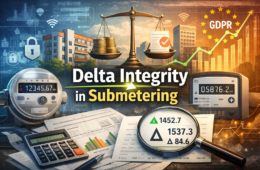

4. What Happens When Data Arrives

When a sensor or gateway submits telemetry:

- The associated device record is updated

- The incoming data is stored

- Configured alert logic evaluates thresholds

- Subscribed systems receive updates immediately
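The four steps above can be modeled in memory as follows. This is a conceptual sketch only: the class name, the single upper-threshold alert rule, and the event dictionary are assumptions, and a production processor would involve persistent storage and real transports.

```python
from collections import defaultdict

class TelemetryPipeline:
    """Toy model of the arrival sequence: update, store, evaluate, notify."""

    def __init__(self, thresholds):
        self.thresholds = thresholds      # device_id -> maximum allowed value
        self.latest = {}                  # current record per device
        self.history = defaultdict(list)  # stored readings per device
        self.subscribers = []             # callbacks for live delivery

    def subscribe(self, callback):
        self.subscribers.append(callback)

    def ingest(self, device_id, timestamp, value):
        self.latest[device_id] = (timestamp, value)          # 1. update device record
        self.history[device_id].append((timestamp, value))   # 2. store incoming data
        limit = self.thresholds.get(device_id)
        alert = limit is not None and value > limit          # 3. evaluate threshold
        event = {"device_id": device_id, "ts": timestamp, "value": value, "alert": alert}
        for notify in self.subscribers:                      # 4. push to subscribers
            notify(event)
        return event

received = []
pipeline = TelemetryPipeline(thresholds={"S-1001": 30.0})
pipeline.subscribe(received.append)
pipeline.ingest("S-1001", "2024-01-01T00:00:00Z", 21.5)
pipeline.ingest("S-1001", "2024-01-01T00:15:00Z", 31.0)
print([event["alert"] for event in received])
```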

From the customer’s perspective, the behavior is simple:

Data arrives.

Records are updated.

Live subscribers are notified.

Historical data remains queryable.

There is no requirement for the customer to manage queues, brokers, or scaling mechanics.

5. Live Delivery and Data Access Models

KRONYX operates API-first. There is no mandatory frontend layer.

Customers access data through three primary models:

1. Live Streaming (Push Model)

For systems that require immediate updates:

- WebSocket feeds provide real-time message streams

- MQTT forwarding delivers tenant-isolated telemetry

- Remote systems can receive event-triggered payloads

All live subscribers receive updates as data is processed.

This enables:

- Billing system synchronization

- ERP integration

- Monitoring dashboards

- External alerting engines

Live delivery does not require polling.
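On the receiving side, a push subscriber typically reduces to a message handler that is invoked once per delivered message. The sketch below is transport-agnostic and could serve as the callback of a WebSocket or MQTT client; the JSON field names (`device_id`, `value`, `alert`) are assumed for the example and are not a documented KRONYX payload format.

```python
import json

def handle_message(raw, ledger):
    """Apply one pushed telemetry message to a local billing ledger."""
    message = json.loads(raw)
    ledger[message["device_id"]] = message["value"]  # keep the latest value per device
    return bool(message.get("alert", False))         # tell the caller whether to escalate

ledger = {}
needs_attention = handle_message(
    '{"device_id": "S-1001", "value": 31.0, "alert": true}', ledger
)
print(ledger, needs_attention)
```

Because the handler runs only when a message arrives, the consuming system never has to poll for changes.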

2. On-Demand Retrieval (Pull Model)

Customers may query:

- Current device values

- Historical time ranges

- Consumption deltas

- Gateway performance records

All of these queries are available through REST API endpoints.

This model is suitable for:

- Periodic billing generation

- Audit extraction

- Compliance verification

- Internal analytics

- Location-based tracking

- Temperature or environmental monitoring and alerting
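An on-demand query for a historical time range could be addressed as in the sketch below. The base URL, the path layout, and the `from`/`to` parameter names are hypothetical; only the general REST pull pattern is taken from the description above.

```python
from urllib.parse import urlencode

BASE_URL = "https://api.example.invalid/v1"  # placeholder, not a real KRONYX host

def history_url(device_id, start, end):
    """Build the URL for a time-range query on one device."""
    query = urlencode({"from": start, "to": end})  # percent-encodes the timestamps
    return f"{BASE_URL}/devices/{device_id}/readings?{query}"

url = history_url("S-1001", "2024-01-01T00:00:00Z", "2024-01-31T23:59:59Z")
print(url)
```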

3. Custom UI Deployment

KRONYX does not impose a frontend. (Coming soon)

Customers may:

- Build proprietary dashboards

- Integrate into existing applications

- Develop mobile or web interfaces

- Use internal BI tools

There are no licensing restrictions tied to building on top of the API layer.

Processing remains externalized while presentation remains fully under customer control.

6. Reporting and Regulatory Alignment

KRONYX supports structured reporting workflows including:

- Delta-based consumption reports

- Time-range exports

- CSV extraction

- Cold archive retrieval where required
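A delta-based consumption report is, at its core, the difference between consecutive cumulative meter readings. The following sketch shows that arithmetic under simplifying assumptions: readings are `(timestamp, cumulative_value)` pairs with sortable ISO-style timestamps, and meter resets or rollovers are not handled.

```python
def consumption_deltas(readings):
    """Turn cumulative meter readings into per-interval consumption."""
    ordered = sorted(readings)  # ISO-style timestamps sort chronologically as strings
    return [
        (later_ts, round(later - earlier, 3))
        for (_, earlier), (later_ts, later) in zip(ordered, ordered[1:])
    ]

readings = [("2024-01-01", 100.0), ("2024-01-02", 103.5), ("2024-01-03", 109.0)]
deltas = consumption_deltas(readings)
print(deltas)  # [('2024-01-02', 3.5), ('2024-01-03', 5.5)]
```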

Data retention policies are configurable and aligned with regulatory expectations.

Controller access to logs and audit trails is maintained.

Sensitive identifiers are pseudonymized or encrypted in accordance with GDPR processor responsibilities.
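One common way to pseudonymize an identifier is a keyed hash, sketched below with HMAC-SHA256. This is a general technique shown for illustration and is not confirmed as the method KRONYX uses; the function name, the key handling, and the 16-character truncation are assumptions.

```python
import hashlib
import hmac

def pseudonymize(identifier, secret_key):
    """Derive a stable pseudonym that cannot be reversed without the key."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # shortened for readability; a longer prefix reduces collision risk

key = b"example-key-kept-by-the-controller"  # placeholder secret
alias = pseudonymize("tenant-4711", key)
print(alias)
```

The same identifier and key always yield the same pseudonym, so records remain joinable without exposing the original value.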

Compliance is structural, not procedural.

7. Operational Segmentation by Organization Size

While the onboarding and delivery model is identical for all customers, the strategic benefit differs by scale.

High-Volume Operators (100,000+ Sensors)

For organizations operating at national or regional scale:

- Telemetry growth does not require internal platform expansion

- No DevOps scaling curve tied to device count

- No infrastructure duplication across regions

- Gateway identity and logging remain structured and auditable

- Data residency remains within EU jurisdiction

Field operations continue unchanged.

Processing complexity is externalized without surrendering control.

Growth becomes operational, not infrastructural.

Small and Mid-Sized Operators

For smaller deployments:

- No need to design or maintain a telemetry backend

- No broker-heavy stack

- No infrastructure monitoring overhead

- No compliance architecture buildout

- Predictable per-device processing economics

Organizations can deploy wireless sensors without becoming a platform engineering company.

Processing becomes a service layer, not a permanent internal burden.

8. What KRONYX Does Not Replace

KRONYX does not replace:

- Installation teams

- Customer relationships

- Billing engines

- CRM platforms

- Branding

It replaces only one responsibility:

Operating a high-frequency telemetry processing layer internally.

Customers retain sovereignty over business operations while delegating the technical discipline of ingestion, storage, and structured delivery.

9. Strategic Perspective

In many sensor deployments, three disciplines coexist:

- Field installation

- Customer service and billing

- Telemetry processing infrastructure

The first two are business differentiators.

The third is an infrastructure specialization.

KRONYX allows organizations to separate these roles cleanly.

Sensors can scale.

Gateways can expand.

Contracts can grow.

Without turning backend processing into an organizational constraint.

Telemetry growth introduces an architectural decision that many organizations postpone.

Installing meters, supplying hardware, and managing customer relationships are established disciplines. They are operational, contractual, and service-oriented.

Operating a large-scale digital processing layer is a different discipline entirely. It involves secure ingestion, credential management, developer operations, audit alignment, and infrastructure scaling responsibilities typically associated with cloud service providers.

For manufacturers, meter suppliers, and service operators, the strategic question is no longer purely technical. It is structural.

Are we a hardware and service organization that leverages digital processing, or are we becoming a cloud infrastructure operator by necessity?

Not every organization needs to specialize in both.

The distinction matters most when scale begins to accelerate.